Unique Technology for Automation in the Most Demanding Conditions

Our Edge in Harsh-Weather Autonomy

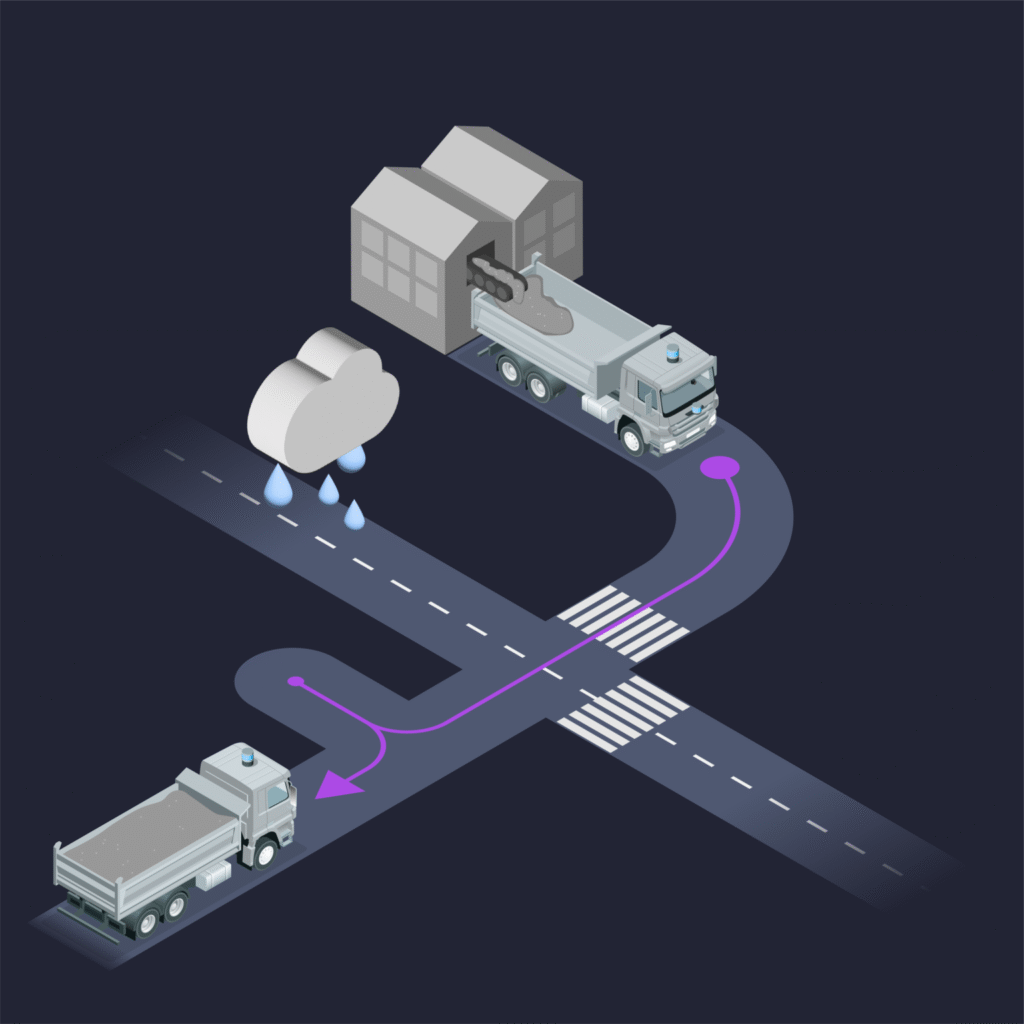

Most autonomous driving systems are designed for fair weather. Sensible 4’s technology is engineered for the real world — where rain, snow, fog, ice, dust, and extreme temperatures are the norm.

Advanced Sensor Fusion

Fuses LiDAR, radar, cameras, GNSS, and IMU data to deliver robust perception and positioning, even in zero-visibility conditions.

Proprietary Localization

Functions without lane markings, using high-definition maps, AI models, and GNSS/IMU corrections for reliable positioning.

AI-Driven Decision Making

Continuously learns and adapts, enhancing safety, efficiency, and route optimization in dynamic environments.

Redundant Safety Systems

Built to meet the strict demands of industrial, defense, and closed-site operations, ensuring fail-safe performance.

Low-Cost, Scalable Deployment

Infrastructure-light design eliminates the need for expensive wireless networks or roadside systems, enabling faster, more affordable fleet rollouts.

Tested in the Toughest Conditions

Our technology has been proven in the world’s most extreme environments:

- Arctic Winters – Reliable performance in snowstorms and temperatures down to –40 °C.

- Tropical Climates – Resilient against heavy rain, heat, and high humidity.

- Desert Operations – Robust in dusty, hot, and low-visibility conditions.

Core Capabilities

Advanced Sensor Fusion

LiDAR, radar, camera, GNSS, and IMU data are fused into a reliable 360° perception layer.

Proprietary Localization

Functions even without lane markings, using high-definition maps, AI models, and GNSS/IMU corrections.

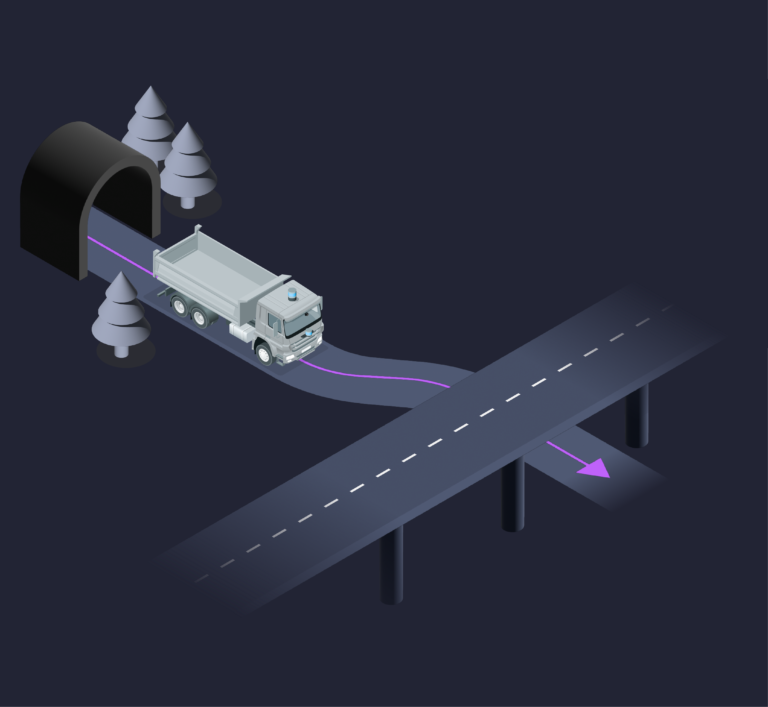

Any-Space Autonomy

Real-time mapping enables seamless operation in GNSS-denied, indoor, outdoor, and hybrid environments.

AI-Driven Decision Making

Continuously learns and adapts, optimizing routes, safety, and efficiency.

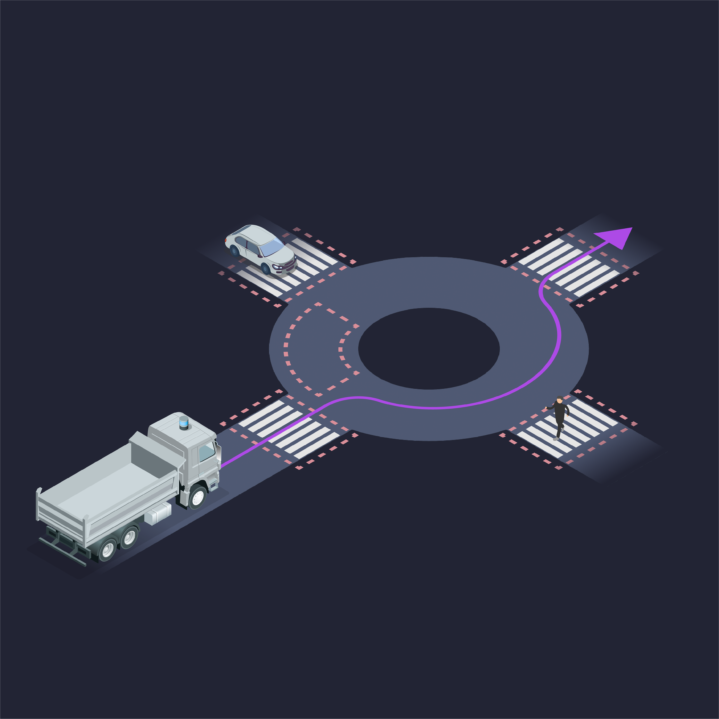

Redundant Safety Systems

Built for industrial, defense, and mixed-traffic environments to ensure fail-safe operation.

How DAWN Works

DAWN is a full-stack retrofit autonomy platform that transforms existing vehicles into autonomous systems without costly infrastructure.

Deployment Options

- Autonomous Haulage – For mining, construction, and industrial material transport.

- DAWN for TeleOps – Available as a standalone remote operations console or integrated with DAWN autonomy for supervision and exception handling.

- Defense Mobility – For convoy operations, tactical logistics, and GNSS-denied scenarios.

- Mixed-Traffic Ready – Scales from closed industrial zones to public roads for future transport networks.

Retrofit Hardware Kit

Alongside DAWN software, we provide a robust retrofit hardware kit designed for industrial-grade deployment:

Integrated Autonomy Stack

Pre-packaged with sensors, compute, and safety systems for seamless installation.

Drive-by-Wire (DbW) Components

Custom-designed to interface reliably with a wide range of vehicle platforms.

Ruggedized & Modular

Built for harsh environments, with a flexible design that enables quick installation and maintenance.

End-to-End Solution

Hardware, autonomy software, and TeleOps console delivered as one cohesive retrofit package.

DAWN for TeleOps

Not all machines can (or should) be fully autonomous — but they can still benefit from remote operation. DAWN for TeleOps provides a standalone remote operations console for supervised and manual control of heavy equipment.

- Standalone or Integrated – Works independently or as part of the DAWN autonomy platform.

- Wider Applicability – Enables remote operation for non-autonomous platforms such as excavators, reach stackers, cranes, and specialized machinery.

- Enhanced Safety – Keeps operators out of hazardous zones while maintaining full manual control capability.

- Operational Flexibility – Lets operators switch seamlessly between autonomy and teleoperation when required.